Chapter 1. Evolution of the Internet

This chapter covers the following key topics:

- • Origins of the Internet; the Internet Today

Very brief history of the early Internet, with emphasis on its implementors and users, and on how it has evolved in the last decade.

- • Network Access Points

Internet service providers must connect, directly or indirectly, with Network Access Points (NAPs); customers will need to know enough to evaluate how their providers connect to the NAPs.

- • Route Arbiter Project

Overview of concepts central to the rest of this book: Route servers and the Routing Arbiter Database. Route servers are architectural components of NAPs, Internet service providers, and other networks.

- • Regional Providers

Background on the current Internet layout with respect to regional connection service and goals.

- • Network Information Services

Description of information collected and offered by central and distributed Internet information services. Includes a description of templates that customers and providers must fill out to get connected.


The structure and makeup of the Internet have adapted as the needs of its community have changed. Today's Internet serves the largest and most diverse community of network users in the computing world. A brief chronology and summary of significant components are provided in this chapter to set the stage for understanding the challenges of interfacing with the Internet and the steps needed to build scalable internetworks.

Origins of the Internet

The Internet started as an experiment in the late 1960s by the Advanced Research Projects Agency (ARPA, now called DARPA) of the U.S. Department of Defense. DARPA experimented with the connection of computer networks by giving grants to multiple universities and private companies to get them involved in the research.

In December 1969, the experimental network went online as a four-node network connected via 56 Kbps circuits. This new technology proved to be highly reliable and led to the creation of two similar military networks, MILNET in the U.S. and MINET in Europe. Thousands of hosts and users subsequently connected their private networks (universities and government) to the ARPANET, thus creating the initial "ARPA Internet." ARPANET had an Acceptable Use Policy (AUP), which prohibited the use of the Internet for commercial purposes. ARPANET was decommissioned in 1989.

By 1985, the ARPANET was heavily used and congested. In response, the National Science Foundation (NSF) initiated phase one development of the NSFNET. The NSFNET was composed of multiple regional networks and peer networks (such as the NASA Science Network) connected to a major backbone that constituted the core of the overall NSFNET.

In its earliest form, in 1986, the NSFNET created a three-tiered network architecture. The architecture connected campuses and research organizations to regional networks, which in turn connected to a main backbone linking six nationally funded supercomputer centers. The original links were 56 Kbps.

The links were upgraded in 1988 to faster T1 (1.544 Mbps) links as a result of the NSFNET 1987 competitive solicitation for a faster network service, awarded to Merit Network, Inc. and its partners MCI, IBM, and the state of Michigan. The NSFNET T1 backbone connected a total of 13 sites that included Merit, BARRNET, MIDnet, Westnet, NorthWestNet, SESQUINET, SURAnet, NCAR (National Center for Atmospheric Research), and five NSF supercomputer centers.

In 1990, Merit, IBM, and MCI started a new organization known as Advanced Network and Services (ANS). Merit Network's Internet engineering group provided a policy routing database and routing consultation and management services for the NSFNET, whereas ANS operated the backbone routers and a Network Operation Center (NOC).

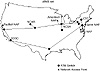

By 1991, data traffic had increased tremendously, which necessitated upgrading the NSFNET's backbone network service to T3 (45 Mbps) links. Figure 1-1 illustrates the original NSFNET with respect to the location of its core and regional backbones.

Figure 1-1 NSFNET-based Internet environment.

As late as the early 1990s, the NSFNET was still reserved for research and educational applications, and government agency backbones were reserved for mission-oriented purposes. But new pressures were being felt by these and other emerging networks. Different agencies needed to interconnect with one another. Commercial and general-purpose interests were clamoring for network access, and Internet service providers (ISPs) were emerging to accommodate those interests, defining an entirely new industry in the process. Networks in places other than the U.S. had developed, along with interest in international connections. As the various new and existing entities pursued their goals, the complexity of connections and infrastructure grew.

Government agency networks interconnected at Federal Internet eXchange (FIX) points on both the east and west coasts. Commercial network organizations had formed the Commercial Internet eXchange (CIX) association, which built an interconnect point on the west coast. At the same time, ISPs around the world, particularly in Europe and Asia, had developed substantial infrastructures and connectivity. To begin sorting out the growing complexity, Sprint was appointed by NSFNET to be the International Connections Manager (ICM)—to provide connectivity between the backbone services in the U.S. and European and Asian networks. NSFNET was decommissioned in April 1995.

The Internet Today

The decommissioning of NSFNET had to be done in specific stages to ensure continuous connectivity to institutions and government agencies that used to be connected to the regional networks. Today's Internet structure is a move from a core network (NSFNET) to a more distributed architecture operated by commercial providers such as Sprint, MCI, BBN, and others connected via major network exchange points. Figure 1-2 illustrates the general form of the Internet today.

Figure 1-2 The general structure of today's Internet.

The contemporary Internet is a collection of providers that have connection points called POPs (points of presence) in multiple regions. A provider's network is formed by its collection of POPs and the way those POPs are interconnected. Customers connect to providers via the POPs, and customers of providers can be providers themselves. Providers that have POPs throughout the U.S. are called national providers.

Providers that cover specific regions (regional providers) connect themselves to other providers at one or multiple points. To enable customers of one provider to reach customers of another provider, Network Access Points (NAPs) are defined as interconnection points. The term ISP is usually used when referring to anyone who provides service, whether directly to end users or to other providers. The term NSP (network service provider) is usually restricted to providers who have NSF funding to manage the Network Access Points, such as Sprint, Ameritech, and MFS. The term NSP, however, is also used more loosely to refer to any provider that connects to all the NAPs.

NSFNET Solicitations

NSFNET has supported data and research on networking needs since 1986. NSFNET also supported the goals of the High Performance Computing and Communications (HPCC) Program, which promoted leading-edge research and science programs. The National Research and Education Network (NREN) Program, which is a subdivision of the HPCC Program, called for gigabit-per-second networking for research and education to be in place by the mid-1990s. All these needs, in addition to the April 1995 expiration deadline of the Cooperative Agreement for NSFNET Backbone Network Services, led NSF to solicit proposals for NSFNET services. This process is generally referred to as solicitation.

The first NSF solicitation, in 1987, led to the NSFNET backbone upgrade to T3 links by the end of 1993. In 1992, NSF wanted to develop a follow-up solicitation that would accommodate and promote the role of commercial service providers and that would lay down the structure of a new and robust Internet model. At the same time, NSF would step back from the actual operation of the network and focus on research aspects and initiatives. The final NSF solicitation (NSF 93-52) was issued in May 1993.

The final solicitation included four separate projects for which proposals were invited:

- • Creating a set of Network Access Points (NAPs) where major providers connect their networks and exchange traffic.

- • Implementing a Route Arbiter (RA) project to facilitate the exchange of policies and addressing of multiple providers connected to the NAPs.

- • Finding a provider of a very high-speed Backbone Network Service (vBNS) for educational and governmental purposes.

- • Transitioning existing and/or realigned regional networks to support interregional connectivity (IRC) by connecting to NSPs that are connected to NAPs or by connecting directly to NAPs. Any NSP selected for this purpose must connect to at least three of the NAPs.


Network Access Points

The solicitation for this project invited proposals from companies to implement and manage a specific number of NAPs where the vBNS and other appropriate networks may interconnect. These NAPs should enable regional networks, network service providers, and the U.S. research and education community to connect and exchange traffic with one another. They also should provide for the interconnection of networks in an environment that is not subject to the NSF Acceptable Use Policy. (This policy was put in place to restrict the use of the Internet to research and education.) Thus, general usage, including commercial usage, can also go through the NAPs.

What Is a NAP?

The NAP is defined as a high-speed network or switch to which a number of routers can be connected for the purpose of traffic exchange. NAPs must operate at speeds of at least 100 Mbps and must be able to be upgraded as required by demand and usage. The NAP could be as simple as an FDDI switch (100 Mbps) or an ATM switch (155 Mbps) passing traffic from one provider to the other.

The concept of the NAP is built on the FIX (Federal Internet eXchange) and the CIX (Commercial Internet eXchange), which are built around FDDI rings with attached Internet networks operating at speeds of up to 45 Mbps.

The traffic on the NAP should not be restricted to that which is in support of research and education. Networks connected to the NAP are permitted to exchange traffic without violating the use policies of any other networks interconnected to the NAP.

There are four NSF-awarded NAPs:

- • Sprint NAP—Pennsauken, NJ

- • PacBell NAP—San Francisco, CA

- • Ameritech Advanced Data Services (AADS) NAP—Chicago, IL

- • MFS Datanet (MAE-East) NAP—Washington, D.C.


The NSFNET backbone service was physically connected to the Sprint NAP on September 13, 1994. It was physically connected to the PacBell NAP and Ameritech NAP in mid-October 1994 and early January 1995, respectively. The NSFNET backbone service was upgraded to the collocated FDDI offered by MFS on March 22, 1995.

Additional NAPs are being created around the world as providers keep finding the need to interconnect.

Networks attaching to NAPs must operate at speeds commensurate with the speeds of attached networks (1.5 Mbps or greater) and must be upgradable as required by demand, usage, and program goals. NAPs must be able to switch both IP and CLNP (ConnectionLess Networking Protocol). The requirements to switch CLNP packets and to implement IDRP-based (InterDomain Routing Protocol, ISO OSI Exterior Gateway Protocol) procedures may be waived depending on the overall level of service and the U.S. government's desire to foster the use of ISO OSI protocols.

NAP Manager Solicitation

A NAP manager should be appointed to each NAP with duties that include the following:

- • Establish and maintain the specified NAP for connecting to vBNS and other appropriate networks.

- • Establish policies and fees for providers that want to connect to the NAP.

- • Propose NAP locations subject to the given general geographic locations.

- • Propose and establish procedures to work with personnel from other NAP managers (if any), the Route Arbiter, the vBNS provider, and regional and other attached networks, to resolve problems and to support end-to-end connectivity and quality of service for network users.

- • Develop reliability and security standards for the NAPs and procedures to ensure that these standards are met.

- • Specify and provide appropriate NAP accounting and statistics gathering and reporting capabilities.

- • Specify appropriate access procedures to the NAP for authorized personnel of connecting networks and ensure that these procedures are carried out.


At the NAP

The physical configuration of today's NAPs is a mixture of FDDI and ATM switches with different access methods, ranging from DS3 for dedicated access to FR/ATM/SMDS for switched access. Figure 1-3 shows a possible configuration, based on some contemporary NAPs. The routers could be managed either by the NSP or by the NAP manager. Different configurations, fees, and policies are set by the NAP manager. Connections from different LATAs (Local Access and Transport Areas) are provided by Inter eXchange Carriers (IXCs).

Figure 1-3 Typical NAP physical infrastructure.

Federal Internet eXchange (FIX)

Due to the decommissioning of the NSFNET backbone, federal regional networks faced the problem of transitioning to the new infrastructure where they have to be connected to new NSPs. The Federal Networking Council (FNC) Engineering and Planning Group (FEPG) was responsible for making a recommendation on how to transition to the new NAP-NSP operational environment with minimal disruption to users, specifically in federal agency communications with the U.S. academic and research communities.

Existing Federal Internet eXchanges (FIX West and FIX East) were to be connected to the major NSPs (MCInet, Sprintlink, ANS). The FIX West backbone formerly was maintained at NASA Ames. Now it is connected to the major NSPs, and route servers were installed to peer with the federal agencies. The FIX East backbone formerly was maintained at SURA (College Park, MD). Now it is connected to the major NSPs and is also bridged to the MAE-East facility (Tyson's Corner, VA) of MFS.

Commercial Internet eXchange (CIX)

The CIX (pronounced Kix) is a nonprofit trade association of Public Data Internetwork Service Providers. The association promotes and encourages the development of the public data communications internetworking services industry in both national and international markets. The CIX provides a neutral forum to exchange ideas, information, and experimental projects among suppliers of internetworking services. Some benefits CIX provides its members include:

- • A neutral forum to develop consensus positions on legislative and policy issues.

- • A fundamental agreement for all CIX members to interconnect with one another. No restriction exists on the type of traffic that can be routed between member networks.

- • No "settlements" nor any traffic-based charges between CIX member networks.

- • Access to all CIX member networks, greatly increasing the correspondents, files, databases, and information services available to them. Users gain a global reach in networking, increasing the value of their network connection.


With increasing ISP connectivity to NAPs, the CIX becomes essential in the coordination of legislative issues between members. In fact, the role of the CIX in physical connectivity is less important than its role in coordination between parties. With the existence of a number of other high-bandwidth connection points such as the NAPs, the CIX plays a minor role in connectivity. ISPs that still rely on the CIX as their only physical connection to the Internet lag well behind.

On July 13, 1994, the CIX board voted to block traffic from ISPs who are not CIX members. CIX membership costs approximately $7,500 annually.

Significance of the NAPs for Routing

Although NAP connectivity is primarily something ISPs have to worry about, the level of redundancy and diversity of NAP connections affects traffic patterns and trajectories in the whole Internet. As such, the delays or speed of access caused by ISPs' interconnectivity affect the performance of everyone's Internet access. As you will see in the rest of this book, speed of access to the NAPs and the distance of an ISP or a customer from the NAP affect routing behaviors and traffic trajectories.

Route Arbiter Project

Another project for which NSF solicited services is the Route Arbiter (RA) project, which is charged with providing equitable treatment of the various network service providers with regard to routing administration. The RA will provide for a common database of route information to promote stability and manageability of networks.

Multiple providers connecting to the NAP have created a scalability issue because each provider will have to peer with all other providers to exchange routing and policy information. The RA project was developed to reduce the full peering mesh between all providers. Instead of peering among each other, providers will peer with a central system called a route server. The route server will maintain a database of all information needed for providers to set their routing policies. Figure 1-4 shows the physical connectivity and logical peering between a route server and various service providers.

Figure 1-4 Route server handling of routing updates in relation to traffic routing.
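The scaling argument for the route server can be made concrete with a small calculation. The following is a minimal sketch, assuming n provider routers at a NAP and a single route server; the function names are illustrative and do not come from any RA software.

```python
def full_mesh_sessions(n: int) -> int:
    """Total peering sessions when each of n routers peers with every other router."""
    return n * (n - 1) // 2


def route_server_sessions(n: int) -> int:
    """Total peering sessions when each of n routers peers only with one route server."""
    return n


def peers_per_router(n: int, use_route_server: bool) -> int:
    """Number of peering sessions a single provider router must maintain."""
    return 1 if use_route_server else n - 1


if __name__ == "__main__":
    # Compare the two designs for a few NAP sizes (sizes chosen arbitrarily).
    for n in (4, 10, 50):
        print(n, full_mesh_sessions(n), route_server_sessions(n))
```

With 10 routers, a full mesh needs 45 sessions in total and 9 per router, whereas the route server design needs 10 sessions in total and 1 per router. The gap widens quadratically as more providers attach to the NAP.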

The following are the major tasks of the RA per the NSFNET proposal:

- • Promote Internet routing stability and manageability.

- • Establish and maintain network topology and policy databases by such means as exchanging routing information with and dynamically updating routing information from the attached Autonomous Systems (AS)1 using standard interdomain routing protocols such as BGP (Border Gateway Protocol) and IDRP (support for IP and CLNP).

1An Autonomous System is a collection of routers under the same administration and sharing a consistent policy.

- • Propose and establish procedures to work with personnel from the NAP manager(s), the vBNS provider, and regional and other attached networks to resolve problems and to support end-to-end connectivity and quality of service for network users.

- • Develop advanced routing technologies such as type of service and precedence routing, multicasting, bandwidth on demand, and bandwidth allocation services, in cooperation with the global Internet community.

- • Provide for simplified routing strategies, such as default routing, for attached networks.

- • Promote distributed operation and management of the Internet.


Today, the RA project is a joint effort of Merit Network, Inc.[1], the University of Southern California Information Sciences Institute (ISI)[2], Cisco Systems, as a subcontractor to ISI, and the University of Michigan ROC, as a subcontractor to Merit.

The RA service comprises four projects:

- • Route server: The route server can be as simple as a Sun workstation deployed at each NAP. The route server exchanges routing information with the service provider routers attached to the NAP. Individual routing policy requirements (RIPE 181)2 [3] for each provider are maintained. The route server itself does not forward packets or perform any switching function. Those functions are taken care of by the NAP's physical network.

2The RIPE language is covered in Appendix A.

The server(s) facilitate interconnections between ISPs by gathering routing information from each ISP, applying the ISP's predefined set of rules and policies, and then redistributing the processed routing information to each ISP. This process saves the routers from having to peer with all other routers, thus cutting down the number of peers from (n-1) to (1), where n is the number of routers.

In this configuration, the routers of the different providers concentrate on switching the traffic between one another and do relatively little filtering and applying of policies.

- • Network management system: This software monitors the performance of the RS. Distributed rovers run at each RS and collect information such as performance statistics. The central network management station (CNMS) at the Merit Routing Operation Center queries the rovers and processes the information.

- • Routing Arbiter Database (RADB)3 [1]: This is one of several routing databases collectively known as the Internet Routing Registry (IRR). Policy routing in the Routing Arbiter Database is expressed by using RIPE-181 syntax developed by the RIPE Network Coordination Center (NCC). The Routing Arbiter Database was deployed in dual mode with the Policy Routing Database (PRDB). The PRDB had been used to configure the NSFNET's backbone routers since 1986. With the introduction of the RIPE-181 language, which provided better functionality in recording global routing policies, the PRDB was to be retired in 1995 in favor of full RADB functionality.

3The RADB is covered in Appendix A.

- • Routing engineering team: This team works with the network providers to set up peering and to resolve network problems at the NAP. The team provides consultation on routing strategies, addressing plans, and other routing-related issues.


As you have already seen, the main parts of the Route Arbiter concept are the route server and the RADB. The practical and administrative goals of the RADB apply mainly to service providers connecting to the NAP. Configuring the correct information in the RADB is essential in setting the required routing policies, as explained in Appendix A, "RIPE-181." As a customer of a provider, you may never have to configure such language. What is important, though, is not the language itself but rather understanding the reasoning behind the policies being set. As you will see in this book, policies are the basis of routing behaviors and architectures.

On the other hand, the concept of a route server and peering with centralized routers is not restricted to providers and NAPs, and could be implemented in any architecture that needs it. As part of the implementation section of this book, the route server concept will come up as a means of creating a one-to-many relationship between peers.
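The gather, apply-policy, and redistribute cycle described for the route server can be illustrated with a toy model. This is a minimal sketch under stated assumptions, not the RA implementation: the `RouteServer` class, its method names, and the simple "do not export to this AS" policy are all hypothetical, and the AS numbers and prefixes are taken from ranges reserved for documentation. Real route servers exchange routes with BGP and read policies written in RIPE-181, neither of which is modeled here.

```python
from collections import defaultdict


class RouteServer:
    """Toy route server: gathers routes from peers and redistributes them per policy.

    It handles routing information only and never forwards data packets.
    """

    def __init__(self):
        # Peer AS number -> set of prefixes that peer has announced.
        self.routes = defaultdict(set)
        # Origin AS number -> set of AS numbers that must not receive its routes.
        self.blocked = defaultdict(set)

    def announce(self, peer_as, prefix):
        """Record a prefix announced by a provider router."""
        self.routes[peer_as].add(prefix)

    def deny_export(self, origin_as, to_as):
        """Hypothetical policy: origin_as does not want its routes sent to to_as."""
        self.blocked[origin_as].add(to_as)

    def routes_for(self, peer_as):
        """Return {prefix: origin AS} for every route this peer is allowed to learn."""
        table = {}
        for origin_as, prefixes in self.routes.items():
            # Never reflect a peer's own routes back, and honor the origin's policy.
            if origin_as == peer_as or peer_as in self.blocked[origin_as]:
                continue
            for prefix in prefixes:
                table[prefix] = origin_as
        return table


rs = RouteServer()
rs.announce(64496, "192.0.2.0/24")
rs.announce(64497, "198.51.100.0/24")
rs.announce(64498, "203.0.113.0/24")
rs.deny_export(64498, 64496)  # AS 64498 chooses not to announce to AS 64496

print(rs.routes_for(64496))
print(rs.routes_for(64497))
```

Each provider keeps a single session to the server yet still learns the routes of every other provider that is willing to announce to it, which is the one-to-many relationship mentioned above.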

The very high-speed Backbone Network Service (vBNS)

The very high-speed Backbone Network Service (vBNS) project was created to provide a specialized backbone service for the high-performance computing users of the major government-supported SuperComputer Centers (SCCs) and for the research community. The vBNS will continue the tradition that NSFNET has provided in this field. The vBNS will be connected to the NSFNET-specified NAPs. On April 24, 1995, MCI and NSF announced the launch of the vBNS.

MCI duties include the following:

- • Establish and maintain a 155 Mbps or higher transit network that switches IP and CLNP, and connects to all NAPs.

- • Establish a set of metrics to monitor and characterize network performance.

- • Subscribe to the policies of the NAP/RA manager.

- • Provide for multimedia services.

- • Participate in the enhancement of advanced routing technologies and propose enhancements in both speed and quality of service that are consistent with NSF customer requirements.


The five-year, $50-million agreement between MCI and NSFNET will tie together NSF's five major high performance communication centers:

- • Cornell Theory Center (CTC), in Ithaca, New York

- • National Center for Atmospheric Research (NCAR) in Boulder, Colorado

- • National Center for SuperComputing Applications (NCSA) at the University of Illinois at Urbana-Champaign

- • Pittsburgh SuperComputing Center (PSC)

- • San Diego SuperComputer Center (SDSC)


The vBNS has been called the R & D lab for the 21st century. The use of advanced switching and fiber optic transmission technologies, Asynchronous Transfer Mode (ATM), and Synchronous Optical Network (SONET) will enable very high-speed, high-capacity voice and video signals to be integrated.

The NSF is already in the process of authorizing use of the vBNS for "meritorious" high-bandwidth applications, such as using super-computer modeling at NCAR to understand how and where icing occurs on aircraft. Other applications at NCSA consist of building computational models to simulate the workings of biological membranes and how cholesterol inserts into membranes.

The vBNS will be accessible to select application sites through four NAPs in New York, San Francisco, Chicago, and Washington, D.C. Figure 1-5 shows the geographical relationships between the centers and NAPs. The vBNS is mainly composed of OC3/T3 links (OC12 is in the process of being deployed) connected via high-end systems, such as Cisco routers and Cisco ATM switches.

Figure 1-5 Overall map illustrating vBNS geographical components.

The vBNS is a specialized network that emerged due to continuing needs for high-speed connections between members of the research and development community, one of the main charters of the NSFNET. Although the vBNS does not have any bearing on global routing behavior, the preceding brief overview is meant to give the reader background on how NSFNET covered all its bases before being decommissioned in 1995.

Moving the Regional Providers

As part of the NSFNET solicitation for transitioning to the new Internet architecture, NSF requested that regional networks (also called mid-level networks) start transitioning their connections from the NSFNET backbones to other providers.

Regional networks have been a part of NSFNET since its creation and have played a major role in the network connectivity of the research and education community. Regional network providers (RNPs) connect a broad base of client/member organizations (such as universities), providing them with multiple networking services and with Inter Regional Connectivity (IRC).

The anticipated duties of the Regional network providers per the NSF 93-52 program solicitation follow:

- • Provide for interregional connectivity by such means as connecting to NSPs that are connected to NAPs and/or by connecting to NAPs directly and making inter-NAP connectivity arrangements with one or more NSPs.

- • Provide for innovative network information services for client/member organizations in cooperation with the InterNIC and the NSFNET Information Services Manager.

- • Propose and establish procedures to work with personnel from the NAP manager(s), the RA, the vBNS provider, and other regional and attached networks to resolve problems and to support end-to-end connectivity and quality of service for network users.

- • Provide services that promote broadening the base of network users within the research and communication community.

- • Provide for, possibly in cooperation with an NSP, high-bandwidth connections for client/member institutions that have meritorious high-bandwidth applications.

- • Provide for network connections to client/member organizations.


In the process of moving the regionals from the NSFNET to new ISP connections, NSF suggested that the regional networks be connected either directly to the NAPs or to providers connected to the NAPs. During the transition, NSF supported, for one year, connection fees that would decrease and eventually cease (after the first term of the NAP Manager/RA Cooperative Agreement, which shall be no more than four years).

Table 1-1 lists some of the old NSFNET regional providers and their new respective providers under the current Internet environment. As you can see, most of the regional providers have shifted to either MCInet or Sprintlink. Moving the regional providers to the new Internet architecture in time for the April 1995 deadline was one of the major milestones that NSFNET had to achieve.

| Old Regional Network | New Internet Provider |
|---|---|
| Argonne | CICnet |
| BARRnet | MCInet |
| CA*net | MCInet |
| CERFnet | CERFnet |
| CICnet | MCInet |
| Cornell Theory Ctr. | MCInet |
| CSUnet | MCInet |
| DARPA | ANSnet |
| JvNCnet | MCInet |
| MOREnet | Sprintlink |
| NEARnet | MCInet |
| NevadaNet | Sprintlink |
| NYSERnet | Sprintlink |
| SESQUINET | MCInet |
| SURAnet | MCInet |
| THEnet | MCInet |
| Westnet | Sprintlink |

NSF Solicits NIS Managers

In addition to the four main projects relating to architectural aspects of the new Internet, NSF recognized that information services would be a critical component in the even more widespread, freewheeling network. As a result, a solicitation for one or more Network Information Services (NIS) managers for the NSFNET was proposed. This solicitation invites proposals for the following:

- • To extend and coordinate Directory and Database and Information Services.

- • To provide registration services for non-military Internet networks. The Defense Information Systems Agency Network Information Center (DISA NIC) will continue to provide for the registration of military networks.

At the time of the solicitation, the domestic, non-military portion of the Internet included the NSFNET and other federally sponsored networks such as the NASA Science Internet (NSI) and Energy Sciences Network (ESnet). All these networks, as well as some other networks of the Internet, were related to the National Research and Education Network (NREN), which was defined in the President's fiscal 1992 budget. The NSF solicitation for Database Services, Information Services, and Registration Services was needed to help the evolution of the NSFNET and the development of the NREN.

Network Information Services

At the time of the proposal, certain network information services were being offered by a variety of providers; some of these services included the following:

- • End-user information services were provided by NSF Network Service Center (NNSC) operated by Bolt, Beranek, and Newman (BBN). Other NSFNET end-user services were provided by campus-level computing and networking organizations.

- • Information services for various federal agency backbone networks were provided by the sponsoring agencies. NSI information services, for example, were provided by NASA.

- • Internet registration services were provided by DISA NIC operated by Government Services, Inc. (GSI).

- • Information services for campus-level providers were provided by NSFNET mid-level network organizations.

- • Information services for NSFNET mid-level network providers were provided by Merit, Inc.

Under the new solicitation, NIS managers should provide services to end users and to campus and mid-level network service providers, and should coordinate with mid-level and other network organizations, such as Merit, Inc.

Creation of the InterNIC

In response to NSF's solicitation for NIS managers, in January 1993 the InterNIC [4] was established as a collaborative project among AT&T, General Atomics, and Network Solutions, Inc. It was to be supported by three five-year cooperative agreements with the NSF. During the second-year performance review, funding by the NSF to General Atomics stopped. AT&T was awarded the Database and Directory Services, and Network Solutions was awarded the Registration Services and the NIC Support Services.

Registration Services (RS)

The NIS manager will act in accordance with RFC 1174, which states the following:

The Internet System has employed a central Internet Assigned Numbers Authority (IANA) for the allocation and assignment of various numeric identifiers needed for the operation of the Internet. The IANA function is performed by the University of Southern California's Information Sciences Institute. The IANA has the discretionary authority to delegate portions of this responsibility and, with respect to numeric network and autonomous system identifiers, has lodged this responsibility with an Internet Registry (IR).

The NIS manager will either become the IR or a delegate registry authorized by the IR. The Internet registration services to be provided will include:

- • Network number assignment

- • Autonomous system number assignment

- • Domain name registration

- • Domain name server registration

Today, Network Solutions, Inc. (NSI) is providing assistance in registering networks, domains, AS numbers, and other entities to the Internet community via telephone, electronic mail, and U.S. postal mail. RS will work closely with domain administrators, network coordinators, ISPs, and various other users to register Internet domains, Autonomous System numbers, and networks.

The RS will provide databases and information servers such as a WHOIS registry for domains, networks, AS numbers, and their associated Points of Contact (POCs). The RS also offers Gopher and Wide Area Information Server (WAIS) interfaces for retrieving information.

The documents distributed by the InterNIC registration services include templates, network information, and policies to request network numbers and register domain name servers.

Directory and Database Services

The implementation of this service should utilize distributed database and other advanced technologies. The NIS manager could coordinate this role with respect to other organizations that have created and maintained relevant directories and databases. AT&T is providing the following services under the NSF agreement.

- • Directory services (white pages):

This provides access to Internet White Pages information using X.500, WHOIS, and netfind systems.

The X.500 directory standard enables the creation of a single worldwide directory of information about various objects of interest—information about people, for example.

The WHOIS lookup service provides unified access to three Internet WHOIS servers for person and organization queries. It searches the InterNIC Directory and Database Services server for non-military domain and non-Point-Of-Contact data. The search for MIL (military) domain data is done via the DISA NIC server, and the search for POC data is done via the InterNIC Registration Services server.

Netfind is a simple Internet white pages directory search facility. Given the name of a user and a description of where the user works, the tool attempts to locate information about the Internet user.

- • Database services:

This should include databases of communication documents such as Request For Comments (RFCs), For Your Information RFCs (FYI), Internet Drafts (IDs), Meeting Minutes, IETF Steering Committee (IESG) documents, and so on. This service could also contain databases maintained for other groups with a possible fee.

AT&T also offers a database service listing of public databases, which contains information of interest to the Internet community.

Access to database and directory services can be done via the Web at http://ds.internic.net/ds/dspgwp.html.

- • Directory of directories:

This service points to other directories and databases such as those listed previously.

This is an index of pointers to resources, products, and services accessible through the Internet. It includes pointers to resources such as computing centers, network providers, information servers, white and yellow pages directories, library catalogs, and so on.

As part of this service, AT&T stores a listing of information resources, including type, description, how to access the resource, and other attributes. Information providers are given access to update and add to the database. The information can be accessed via different methods such as telnet, ftp, gopher, e-mail, and www.
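The unified WHOIS lookup described under directory services can be pictured as a small dispatch step. The sketch below is illustrative only: the server labels and the function `choose_whois_server` are hypothetical names, and the use of an explicit `is_poc` flag (checked before the MIL test) is an assumption, because the text does not say how the service tells the query types apart.

```python
# Hypothetical labels for the three WHOIS servers named in the text.
DS_SERVER = "InterNIC Directory and Database Services"
RS_SERVER = "InterNIC Registration Services"
DISA_SERVER = "DISA NIC"


def choose_whois_server(query: str, is_poc: bool = False) -> str:
    """Pick the server that would answer a query, following the three rules above."""
    # Assumption: the caller flags Point of Contact queries explicitly.
    if is_poc:
        return RS_SERVER
    # Military (MIL) domain data is served by the DISA NIC.
    name = query.strip().lower().rstrip(".")
    if name == "mil" or name.endswith(".mil"):
        return DISA_SERVER
    # Everything else is non-military, non-POC data.
    return DS_SERVER
```

A call such as `choose_whois_server("example.edu")` falls through to the Directory and Database Services server, while a name ending in `.mil` is sent to the DISA NIC.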

NIC Support Services

The original solicitation for "Information Services" was granted to General Atomics in 1993 and taken away in February 1995. At that time, Network Solutions, Inc. took over the proposal, and it was renamed NIC Support Services.

The goal of this service is to provide a forum for the research and education community, Network Information Center (NIC) staff, and the academic Internet community, within which the responsibilities, duties, and functions of the InterNIC may be defined. As of now, this service is divided into two components:

- • Info Scout Service:

NSI subcontracts to the University of Wisconsin, Madison for this service. The scout staff at the university and the NSI NIC support staff work together to serve both end-users and NICs in the higher-education community.

- • NIC Support Service at NSI:

The definition of NICs refers to individuals or organizations within the research and education community who provide a wide range of support for people within their client base who use the Internet.

The focus of NSI is to provide an outreach program to the NIC community, soliciting input from the community on a regular basis and acting on the input by implementing new InterNIC services in support of NICs.

Other Internet Registries

Other Internet Registries (IR) were created outside the U.S.; these registries perform functions similar to those performed by the InterNIC in the U.S.

RIPE NCC (Réseaux IP Européens Network Coordination Center)

Created in 1989, RIPE [5] is a collaborative organization that consists of European Internet service providers. It aims to provide the necessary administration and coordination to enable the operation of the European Internet.

APNIC (Asia Pacific Network Information Center)

APNIC [6] is the IR for the Asia Pacific rim. It provides the IP registration and domain name services for that region. Created in 1993, APNIC started as a 10-month pilot project with the goal of providing Internet Registry functions and Routing Registry functions (the RR function has not materialized to date). The pilot proved to be successful, and the APNIC is now in full operation serving as an IR.

Other Internet Registries are listed on the InterNIC [4] home page.

Internetworking Routing Registries (IRR)

With the creation of a new breed of ISPs that want to interconnect with one another, offering the required connectivity while maintaining flexibility and control has become more challenging. Each provider has a set of rules, or policies, that describe what to accept and what to advertise to all other neighboring providers. Example policies include determining route filtering from a particular ISP and choosing the preferred path to a specific destination. The potential for the various policies from interconnected providers to conflict with and contradict one another is enormous.

To address these challenges, a neutral Routing Registry (RR) for each global domain had to be created. Each RR will maintain a database of routing policies created and updated by each service provider. The collection of these different databases is known as the Internetworking Routing Registries (IRR).

The role of the RR is not to determine policies, but rather to act as a repository for routing information and to perform consistency checking on the registered information with the other RRs. This should provide a globally consistent view of all policies used by providers all over the world.
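One way to picture the consistency checking a registry performs is sketched below. The policy format is an assumption made purely for illustration (each AS lists the neighbors it advertises routes to and the neighbors it accepts routes from); it is not the format used by any actual registry, and the names `Policy` and `find_conflicts` are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Policy:
    """Hypothetical, simplified policy record for one autonomous system."""
    advertises_to: set = field(default_factory=set)  # neighbor AS numbers
    accepts_from: set = field(default_factory=set)   # neighbor AS numbers


def find_conflicts(registry: dict) -> list:
    """Return (sender, receiver) pairs where the sender advertises to a
    neighbor that has not registered a matching accept policy."""
    conflicts = []
    for asn, policy in sorted(registry.items()):
        for neighbor in sorted(policy.advertises_to):
            peer = registry.get(neighbor)
            # A neighbor with no registered policy also counts as a mismatch.
            if peer is None or asn not in peer.accepts_from:
                conflicts.append((asn, neighbor))
    return conflicts


# Example registry using AS numbers from the range reserved for documentation.
registry = {
    64500: Policy(advertises_to={64501}, accepts_from={64501}),
    64501: Policy(advertises_to={64500}, accepts_from=set()),
}
print(find_conflicts(registry))  # prints [(64500, 64501)]
```

Note that the check only reports mismatches; in keeping with the role described above, it does not decide which of the two policies should change.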

Autonomous Systems (ASs) use exterior gateway protocols such as BGP to work with one another. In complex environments, there should be a formal way of describing and communicating policies between different ASs. A single huge database containing all registered policies for the whole world would be cumbersome and difficult to maintain. This is why a more distributed approach was created. Each RR will maintain its own database and will have to coordinate extensively to achieve consistency between the different databases. Some of the different IRR databases in existence today are:

- • RIPE Routing Registry (European Internet service providers)

- • MCI Routing Registry (MCI customers)

- • CA*net Routing Registry (CA*net customers)

- • ANS Routing Registry (ANS customers)

- • Routing Arbiter Database (all others)

- • JPRR Routing Registry (Japanese Internet service providers)

Each of the preceding registries serves a limited number of customers except for the Routing Arbiter Database (RADB), which handles all requests not serviced by other registries. As mentioned earlier, the RADB is part of the Routing Arbiter (RA) project, which is a collaboration between Merit and ISI with subcontracts to Cisco Systems and the University of Michigan ROC.
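The catch-all role of the RADB amounts to a lookup with a default. In the minimal sketch below, the dictionary keys are hypothetical labels for the communities listed above, and `registry_for` is an illustrative name rather than part of any registry software.

```python
# Hypothetical community labels mapped to the registries listed in the text.
REGISTRY_BY_COMMUNITY = {
    "RIPE": "RIPE Routing Registry",
    "MCI": "MCI Routing Registry",
    "CA*net": "CA*net Routing Registry",
    "ANS": "ANS Routing Registry",
    "JPRR": "JPRR Routing Registry",
}
RADB = "Routing Arbiter Database"


def registry_for(community: str) -> str:
    """Return the registry serving a community, falling back to the RADB."""
    return REGISTRY_BY_COMMUNITY.get(community, RADB)
```

A provider that belongs to none of the listed communities, for example `registry_for("Example ISP")`, is directed to the RADB.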

Looking Ahead

The decommissioning of the NSFNET in April 1995 marked the beginning of a new era. The Internet today is a playground for hundreds and thousands of providers competing for market share. For many businesses and organizations, connecting their networks to the global Internet is no longer a luxury but a requirement for staying competitive.

The structure of the contemporary Internet has implications for service providers and their customers in terms of speed of access, reliability, and cost of use. Some of the questions that organizations wanting to connect to the Internet should ask are: Are providers, whether established or relatively new to the business, well versed in routing behaviors and architectures? For that matter, how much do customers of providers need to know and do with respect to routing architecture? Do we really know what constitutes a stable network? Is the bandwidth of our access line all we need to worry about to have the "fastest" Internet connection? The next chapter is intended to help ISPs and their customers evaluate these questions in a basic way. Later chapters get into details of routing architecture.

Interdomain routing is fairly new to everybody and is evolving every day. The rest of this book builds upon this chapter's overview of the structure of the Internet in explaining and demonstrating current routing practices.